DisplayPort Vs HDMI – which display interface is better?

Comparing the two audio/video interfaces to see which is best for certain scenarios

WePC is reader-supported. When you buy through links on our site, we may earn an affiliate commission. Prices subject to change.

When you think of a display interface cable, chances are you think of HDMI or DisplayPort, the two most commonly used interfaces for audio and video. While HDMI and DisplayPort perform similar functions, there are quite a few differences between them.

Manufacturers have been using HDMI for years, allowing the high-speed transfer of both audio and video from a source device to a display. It’s everywhere: monitors, TVs, media streamers, Blu-ray players, A/V receivers, gaming consoles, and even digital cameras. If it’s a consumer electronic device, chances are it’ll have HDMI support.

DisplayPort, often called the gamer’s input, is another option manufacturers can use for their input/output requirements. Interestingly, DisplayPort outperforms HDMI in some respects, most notably the peak bandwidth of its latest version, when the two are compared on a strict spec-by-spec basis.

In the following article, we’ll be taking a closer look at the two major audio/video standards to see how they compare. We’ll be answering all the pressing questions surrounding the subject, concluding with which is better for your needs as both a gamer and a general user.

HDMI – what is it, and how does it work?

Outside of PC gaming, the HDMI standard still reigns supreme. Constant updates have kept the interface relevant, and it has been the go-to audio/video connection since its launch in 2002.

Historically, HDMI has trailed DisplayPort on raw bandwidth. In real-world use, however, the two are on a fairly level playing field: HDMI 2.0 has supported 4K at 60Hz with 24-bit color since 2013. Until displays that demand extreme resolutions at very high refresh rates become mainstream, there’s little practical difference between the two standards for most people.
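For a sense of why HDMI 2.0 can carry 4K at 60Hz with 24-bit color, here’s a back-of-the-envelope check in Python. It’s a minimal sketch assuming the standard CTA-861 4K60 timing (4400 × 2250 total pixels per frame including blanking, which is a 594MHz pixel clock) and HDMI 2.0’s 18Gbps raw link rate with 8b/10b encoding; the function and constant names are illustrative.

```python
# Back-of-the-envelope check: does 4K 60Hz with 24-bit color fit in HDMI 2.0?
# Assumption: CTA-861 4K60 timing, i.e. 4400 x 2250 total pixels per frame
# (active 3840 x 2160 plus blanking), which works out to a 594 MHz pixel clock.

HDMI_2_0_RAW_GBPS = 18.0   # raw TMDS link rate across the three data channels
TMDS_EFFICIENCY = 8 / 10   # 8b/10b encoding: 8 data bits for every 10 bits sent


def required_gbps(h_total: int, v_total: int, refresh_hz: int, bits_per_pixel: int) -> float:
    """Video data rate in Gbps, counting blanking intervals as part of the frame."""
    return h_total * v_total * refresh_hz * bits_per_pixel / 1e9


need_24bit = required_gbps(4400, 2250, 60, 24)   # 8 bits per color channel
need_30bit = required_gbps(4400, 2250, 60, 30)   # 10 bits per color channel
available = HDMI_2_0_RAW_GBPS * TMDS_EFFICIENCY

print(f"24-bit: {need_24bit:.3f} Gbps needed, fits: {need_24bit <= available}")
print(f"30-bit: {need_30bit:.3f} Gbps needed, fits: {need_30bit <= available}")
print(f"available: {available:.1f} Gbps")
```

The 24-bit case (14.256Gbps) squeezes in just under the 14.4Gbps of data HDMI 2.0 can actually carry, while 10-bit color at full chroma resolution (17.82Gbps) does not, which is why 4K60 HDR over HDMI 2.0 typically falls back to chroma subsampling.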

AMD GPU owners will also be pleased to hear that HDMI has supported VRR (via an AMD extension) since 2.0b. However, VRR only became part of the official specification with HDMI 2.1.

The main benefit of an HDMI connection comes down to its versatility. HDMI is ubiquitous, having been built into millions of devices since its arrival in 2002. TVs, Blu-ray players, and all kinds of other consumer electronics offer multiple HDMI inputs, so you can hook several devices up to one display. TV manufacturers have also started rolling out HDMI 2.1 products, with LG selling an HDMI 2.1-equipped OLED TV since 2019.

Unlike DisplayPort, HDMI isn’t as constrained by cable length. You can buy 15m HDMI cables right now, five times the roughly 3m maximum typically cited for DisplayPort. On paper, though, HDMI 2.1 still offers less bandwidth than DisplayPort 2.0.

DisplayPort – what is it, and how does it work?

Ultimately, DisplayPort is the gamer’s choice when it comes to input options. At present, DisplayPort 1.4 is the most commonly used and readily available version of the standard. Despite DisplayPort 2.0 being ‘released’ in the summer of 2019, there still aren’t any GPUs or displays that actually use this new iteration, something I didn’t expect after Nvidia’s 30-series and AMD’s Big Navi launches. Both instead stuck with DisplayPort 1.4a, which can carry 8K at 60Hz using DSC (Display Stream Compression), more than enough for today’s needs.
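For a rough sense of why DSC is needed for 8K at 60Hz over DisplayPort 1.4a, the sketch below compares the uncompressed data rate with the link’s usable capacity (four HBR3 lanes at 8.1Gbps each, less 8b/10b overhead). The 10-bit color depth and the 3:1 compression ratio are assumptions chosen for illustration, blanking intervals are ignored (so real figures run somewhat higher), and the names are our own.

```python
# Rough estimate: 8K at 60Hz over DisplayPort 1.4a, with and without DSC.
# Assumptions (illustrative only): 30 bits per pixel (10-bit color),
# a 3:1 DSC compression ratio, and active pixels only (blanking ignored).

LANES = 4
HBR3_GBPS_PER_LANE = 8.1
DP14_USABLE_GBPS = LANES * HBR3_GBPS_PER_LANE * 8 / 10   # 8b/10b -> 25.92 Gbps

ASSUMED_DSC_RATIO = 3.0


def active_gbps(width: int, height: int, refresh_hz: int, bits_per_pixel: int) -> float:
    """Data rate of the visible pixels only, in Gbps."""
    return width * height * refresh_hz * bits_per_pixel / 1e9


uncompressed = active_gbps(7680, 4320, 60, 30)
compressed = uncompressed / ASSUMED_DSC_RATIO

print(f"link capacity: {DP14_USABLE_GBPS:.2f} Gbps")
print(f"uncompressed 8K60: {uncompressed:.2f} Gbps, fits: {uncompressed <= DP14_USABLE_GBPS}")
print(f"with 3:1 DSC:      {compressed:.2f} Gbps, fits: {compressed <= DP14_USABLE_GBPS}")
```

Uncompressed, the signal needs more than twice what the link can carry; with compression at the assumed ratio it drops comfortably below the limit.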

Even so, there are some clear advantages to using DisplayPort over the current HDMI standard. Alongside its long-standing support for VRR (FreeSync and G-Sync), DisplayPort also offers a much more secure physical connection than HDMI.

At the base of the connector, full-size DisplayPort plugs usually have two prongs that lock them into place, so you have to press a button to release them. The same can’t be said for most HDMI cables, which often get pulled out when you move or swap monitors. More impressive, however, is DisplayPort’s ability to drive multiple displays (up to four, depending on resolution) from a single port via Multi-Stream Transport (MST).
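To illustrate why the number of MST displays depends on resolution, here’s a rough bandwidth budget in Python. It’s a sketch under stated assumptions: a DisplayPort 1.4 link (four HBR3 lanes at 8.1Gbps, 8b/10b encoding), identical 60Hz monitors at 24-bit color, no DSC, and blanking ignored. Real GPUs and MST hubs also cap the number of streams, so the results are upper bounds; the function names are illustrative.

```python
# Rough MST budget: how many identical monitors could share one DisplayPort 1.4 link?
# Assumptions: 24-bit color, active pixels only (blanking ignored), no DSC.
# GPUs and MST hubs impose their own stream limits, so these are upper bounds.

DP14_USABLE_GBPS = 4 * 8.1 * 8 / 10   # 4 HBR3 lanes, 8b/10b encoding -> 25.92 Gbps


def stream_gbps(width: int, height: int, refresh_hz: int, bits_per_pixel: int = 24) -> float:
    """Data rate of one monitor's visible pixels, in Gbps."""
    return width * height * refresh_hz * bits_per_pixel / 1e9


def max_monitors(width: int, height: int, refresh_hz: int) -> int:
    """Whole number of identical streams that fit within the link's usable rate."""
    return int(DP14_USABLE_GBPS // stream_gbps(width, height, refresh_hz))


for w, h in [(1920, 1080), (2560, 1440), (3840, 2160)]:
    print(f"{w}x{h} @ 60Hz: up to {max_monitors(w, h, 60)} monitors by bandwidth alone")
```

Under these assumptions the link has room for eight 1080p, four 1440p, or two 4K monitors at 60Hz, which fits the "up to four displays, depending on resolution" rule of thumb.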

One downside of DisplayPort is cable length: around 3m is the maximum at the time of writing. That doesn’t bode well for general consumers, and it’s definitely something DisplayPort cable manufacturers should look at.

DisplayPort vs HDMI – what’s the difference?

Before we go into the details, let’s take a look at some very obvious differences between HDMI and DisplayPort.

| HDMI | DisplayPort |
|---|---|
| Carries audio and video | Mainly carries video but can also carry audio |
| Launched in 2002 | Launched in 2006 |
| 19-pin connector | 20-pin connector |
| Mainly used in TVs, streaming devices, consoles, laptops, and other similar consumer electronics | Mostly used in PCs and monitors |
| Features such as ARC, Ethernet, and CEC | Features such as adaptive sync support and MST for daisy-chaining |
| Affordable | Slightly more expensive |

Different versions of HDMI and DisplayPort

Connectors

Taking a quick look at the physical connectors of both DisplayPort and HDMI, there are some obvious differences to be found.

The HDMI connector has 19 pins and comes in three sizes, each used by a different class of device. Type A (Standard) is what you’re likely to see on most TVs, soundbars, consoles, and other larger A/V systems. Type C (Mini) and Type D (Micro) are usually found on smaller devices like mobile phones, dashcams, and tablets.

The DisplayPort connector has 20 pins and comes in only two sizes: DisplayPort and Mini DisplayPort. The first is what you’ll see on modern GPUs; the second was more commonly seen on Microsoft’s Surface Pro and Apple Macs before the arrival of USB Type-C/Thunderbolt 3.

Both HDMI and DisplayPort cables come in a variety of forms. Interestingly, some manufacturers have adopted proprietary locking methods to help keep cables in place, which is especially useful when a cable plugs in vertically (into the underside of a monitor, for example).

Bandwidth

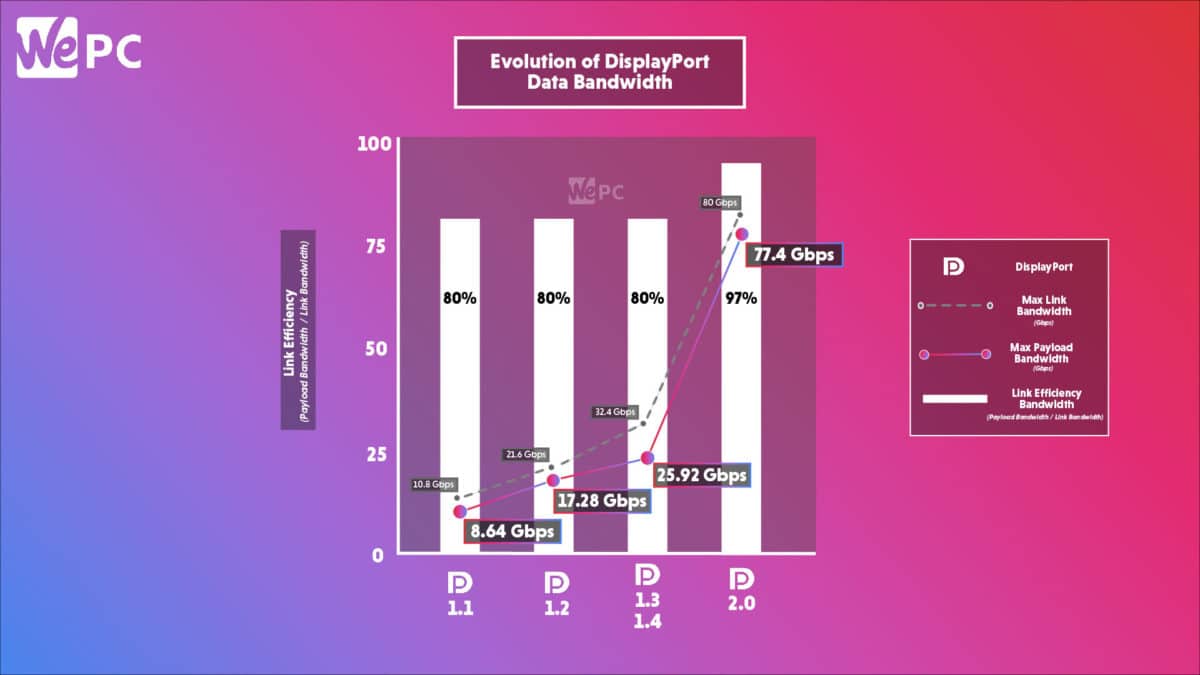

As you can see from the table above, both HDMI and DisplayPort have greatly widened their transfer limits since they arrived on the market. The latest revision of HDMI (2.1) supports bit rates of up to an impressive 48Gbps, while DisplayPort 2.0 offers up to 80Gbps, enough for uncompressed 4K at 240Hz.

Please note, though, that the 48Gbps and 80Gbps figures are raw link rates. Part of that capacity goes to line encoding rather than picture data: the extra bits keep the signal DC-balanced and let the receiver recover the clock. Older versions (HDMI 2.0 and earlier, DisplayPort 1.4 and earlier) use 8b/10b encoding, so only 80% of the raw rate carries data, while HDMI 2.1’s FRL mode (16b/18b) and DisplayPort 2.0’s UHBR modes (128b/132b) are considerably more efficient. Either way, both are huge leaps over older revisions, which should leave mainstream TVs and GPUs plenty of headroom for the future.
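The effect of each encoding scheme on usable bandwidth can be sketched in a few lines of Python. This only accounts for line encoding; other protocol overheads (such as DisplayPort 2.0’s forward error correction) are ignored, and the table and function names are illustrative.

```python
# Usable bandwidth after line encoding, for the link modes discussed above.
# Each entry: (raw link rate in Gbps, data bits, bits on the wire).
# Only line encoding is modeled; other protocol overheads are ignored.

LINK_MODES = {
    "HDMI 2.0 (TMDS, 8b/10b)": (18.0, 8, 10),
    "HDMI 2.1 (FRL, 16b/18b)": (48.0, 16, 18),
    "DisplayPort 1.4 (HBR3, 8b/10b)": (32.4, 8, 10),
    "DisplayPort 2.0 (UHBR20, 128b/132b)": (80.0, 128, 132),
}


def usable_gbps(raw_gbps: float, data_bits: int, wire_bits: int) -> float:
    """Raw rate scaled by the fraction of transmitted bits that carry data."""
    return raw_gbps * data_bits / wire_bits


for name, (raw, data_bits, wire_bits) in LINK_MODES.items():
    efficiency = data_bits / wire_bits
    usable = usable_gbps(raw, data_bits, wire_bits)
    print(f"{name}: {raw:g} Gbps raw -> {usable:.2f} Gbps usable ({efficiency:.1%})")
```

This gives roughly 14.4, 42.67, 25.92, and 77.58Gbps respectively. Published payload figures for DisplayPort 2.0 come out slightly lower (around 77Gbps) because of the overheads this sketch leaves out.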

Compatibility

Let’s compare HDMI and DisplayPort based on their compatibility. HDMI was originally made for TVs, but it has since spread to almost every consumer-grade A/V device, including consoles and laptops. So, if you want to plug into any display, HDMI is the easiest and most accessible option.

In comparison, DisplayPort was designed mainly for computers, so you’ll find it mostly on PCs, laptops, and monitors. At the moment, very few TVs have a DisplayPort input, and gaming consoles usually don’t have one either.

Is DisplayPort sound quality better than HDMI?

In terms of sound quality, there isn’t much difference between HDMI and DisplayPort, as both can carry high-quality audio: up to eight channels of uncompressed audio at a 192kHz sampling rate, which is enough for surround sound. However, HDMI offers ARC and HDMI-CEC, which make audio setup simpler, for example when connecting a TV to a soundbar or A/V receiver. DisplayPort, meanwhile, may need additional setup and configuration for audio output.
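To put the audio side in perspective, here’s the arithmetic for eight channels at 192kHz. The 24-bit sample depth is an assumption (the text above only gives the channel count and sampling rate), and the HDMI 2.0 comparison is purely for scale.

```python
# How much bandwidth does 8-channel uncompressed audio actually need?
# Assumption: 24-bit linear PCM samples; the 8-channel, 192kHz figures
# come from the text above.

CHANNELS = 8
SAMPLE_RATE_HZ = 192_000
BITS_PER_SAMPLE = 24  # assumed bit depth

audio_bps = CHANNELS * SAMPLE_RATE_HZ * BITS_PER_SAMPLE

# For scale: the usable data rate of an HDMI 2.0 link (18 Gbps raw, 8b/10b).
hdmi20_usable_bps = 18e9 * 8 / 10

print(f"audio: {audio_bps / 1e6:.3f} Mbps")
print(f"that is {audio_bps / hdmi20_usable_bps:.2%} of HDMI 2.0's usable rate")
```

At about 36.9Mbps, even uncompressed multichannel audio is a tiny fraction of either link’s capacity, so bandwidth isn’t what separates the two for sound; features like ARC and CEC are.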

Is HDMI 2.1 or DisplayPort 1.4 better?

The choice between HDMI 2.1 and DisplayPort 1.4 will depend on your requirements, preferences, and budget.

On paper, HDMI 2.1 offers more bandwidth (48Gbps versus 32.4Gbps for DisplayPort 1.4), which matters for high resolutions and refresh rates. But while HDMI 2.1 offers more future-proofing, hardware compatibility can be an issue, since not every GPU and monitor supports it yet. DisplayPort 1.4, on the other hand, is widely supported by PC graphics cards and monitors and is usually affordable, which makes it a great choice for PC users. However, it might limit your scope for future-proofing.
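What that extra bandwidth means in practice can be sketched by checking a few 4K, 10-bit modes against each link’s usable rate after encoding. This is a rough illustration that assumes full RGB/4:4:4 color at 30 bits per pixel, no DSC, and active pixels only (blanking ignored, so real requirements are a bit higher); the names are our own.

```python
# Which 4K, 10-bit modes fit uncompressed on HDMI 2.1 vs DisplayPort 1.4?
# Assumptions: active pixels only (blanking ignored), full RGB/4:4:4 color
# at 30 bits per pixel, and no DSC.

USABLE_GBPS = {
    "HDMI 2.1": 48 * 16 / 18,          # FRL, 16b/18b encoding -> ~42.67 Gbps
    "DisplayPort 1.4": 32.4 * 8 / 10,  # HBR3, 8b/10b encoding -> 25.92 Gbps
}


def active_gbps(width: int, height: int, refresh_hz: int, bits_per_pixel: int) -> float:
    """Data rate of the visible pixels only, in Gbps."""
    return width * height * refresh_hz * bits_per_pixel / 1e9


for hz in (60, 120, 144):
    need = active_gbps(3840, 2160, hz, 30)
    verdicts = ", ".join(
        f"{link}: {'fits' if need <= cap else 'exceeds'}" for link, cap in USABLE_GBPS.items()
    )
    print(f"4K {hz}Hz 10-bit ({need:.2f} Gbps) -> {verdicts}")
```

Under these assumptions, 4K at 60Hz fits on both, while 4K at 120Hz and 144Hz fit on HDMI 2.1 but exceed DisplayPort 1.4, which then needs DSC or chroma subsampling to carry them.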

Is DisplayPort better than HDMI for 144Hz?

For a 144Hz refresh rate, you can use either HDMI or DisplayPort, so the choice comes down to your hardware and budget. While both can deliver 144Hz, DisplayPort might give you a slight edge.

DisplayPort 1.2 and later versions natively support 144Hz at FHD (1080p) and QHD (1440p) resolutions, so you won’t need any additional setup. HDMI 2.0 can also support 144Hz at 1440p, but not every monitor or GPU does, so you might need to configure your devices to reach this refresh rate.
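A quick headroom check shows both links have the raw capacity for these modes. It’s a sketch assuming 24-bit color, no DSC, and active pixels only (blanking ignored); DisplayPort 1.2 is modeled as four HBR2 lanes at 5.4Gbps with 8b/10b encoding, and the names are illustrative.

```python
# Do 1080p and 1440p at 144Hz fit on DisplayPort 1.2 and HDMI 2.0?
# Assumptions: 24-bit color, active pixels only (blanking ignored), no DSC.

USABLE_GBPS = {
    "DisplayPort 1.2": 4 * 5.4 * 8 / 10,  # 4 HBR2 lanes, 8b/10b -> 17.28 Gbps
    "HDMI 2.0": 18 * 8 / 10,              # TMDS, 8b/10b -> 14.4 Gbps
}


def active_gbps(width: int, height: int, refresh_hz: int, bits_per_pixel: int = 24) -> float:
    """Data rate of the visible pixels only, in Gbps."""
    return width * height * refresh_hz * bits_per_pixel / 1e9


for label, (w, h) in {"1080p": (1920, 1080), "1440p": (2560, 1440)}.items():
    need = active_gbps(w, h, 144)
    headroom = ", ".join(
        f"{link} headroom {cap - need:.2f} Gbps" for link, cap in USABLE_GBPS.items()
    )
    print(f"{label} @ 144Hz needs {need:.2f} Gbps; {headroom}")
```

Both modes fit on paper, so whether you get 144Hz over HDMI 2.0 depends on what your monitor and GPU support rather than on raw bandwidth, although 1440p leaves HDMI 2.0 with much less headroom (about 1.66Gbps) than DisplayPort 1.2 (about 4.54Gbps).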

Conclusion

If we look at HDMI 2.1 and DisplayPort 1.4, the versions most people will be choosing between today, the “right” choice depends on your requirements, the devices you have, and your preferences. If you want the highest possible bandwidth and resolution, plus more future-proofing, HDMI 2.1 is a good choice. However, if you’re looking for wider compatibility with PC hardware, multi-monitor daisy-chaining via MST, and solid everyday performance, DisplayPort 1.4 is the way to go.